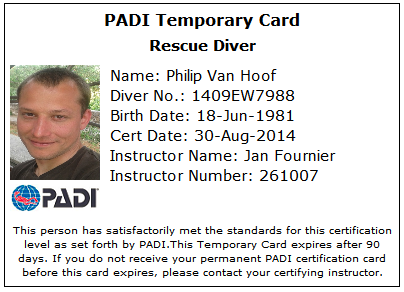

I worked really hard for this one. Buddy breathing, calming panicking divers at 20 meters deep, keeping your own cool. And all that in Belgian waters (no visibility and freezing cold). We simulated it all. It was harder than most other things I have done in my life.


Let’s make things better

Matthew gets that developers need good equipment.

Glade, Scaffolding (DevStudio), Scintilla & GtkSourceView, Devhelp, gnome-build and Anjuta also got it earlier.

I think that with GNOME focusing on this, and a bit less on women’s outreach programs, we could make a difference this year.

Luckily our code is good enough that it can be reused for what is relevant today.

It’s all about what we focus on.

Can we please now go back to making software?

ps. I’ve been diving in Croatia. Trogir. It was fantastic. I have some new reserves in my mental system.

ps. Although we’re very different I have a lot of respect for your point of view, Matthew.

Tracker supports volume management under a minimal environment

While Nemo Mobile OS ships with neither udisks2 nor the GLib/GIO GVfs2 modules that interact with it, we still wanted removable volume management to work with the file indexer.

This means that types like GVolume and GVolumeMonitor in GLib’s GIO fall back to GUnixVolume and GUnixVolumeMonitor, which use GUnixMount and GUnixMounts instead of the more competent GVfs2 modules.

The GUnixMounts fallback uses the _PATH_MNTTAB, which generally points to /proc/mounts, to know what the mount points are.

Removable volumes usually aren’t configured in the /etc/fstab file (which would or could affect /proc/mounts), and even if you did configure them that way, the UUID label can’t be known up front: you don’t know which SD card the user will insert. Tracker’s FS miner needs this label to uniquely identify a removable volume, so that it can tell when a previously seen volume returns.

If you look at g_unix_volume_get_identifier in gunixvolume.c, you’ll notice that it always returns NULL when the UUID label isn’t set in the mtab file. The pure-Unix fallback implementations aren’t fit for non-typical desktop usage; this is what udisks2 and GVfs2 normally provide for you. But we don’t have those on Nemo Mobile OS.

The mount_add in miners/fs/tracker-storage.c luckily has an alternative that uses the mountpoint’s name (line ~592). We’ll use this facility to compensate for the lacking UUID.

Basically, we add the UUID of the device to the mountpoint’s directory name, and Tracker’s existing volume management will generate a unique identifier using MD5 for each unique mountpoint directory. What follows is specific to Nemo Mobile and its systemd setup.

We added some udev rules to /etc/udev/rules.d/90-mount-sd.rules:

SUBSYSTEM=="block", KERNEL=="mmcblk1*", ACTION=="add", MODE="0660", TAG+="systemd", ENV{SYSTEMD_WANTS}="mount-sd@%k.service", ENV{SYSTEMD_USER_WANTS}="tracker-miner-fs.service tracker-store.service"

We added /etc/systemd/system/mount-sd@.service:

[Unit]

Description=Handle sdcard

After=init-done.service dev-%i.device

BindsTo=dev-%i.device

[Service]

Type=oneshot

RemainAfterExit=yes

ExecStart=/usr/sbin/mount-sd.sh add %i

ExecStop=/usr/sbin/mount-sd.sh remove %i

And we created mount-sd.sh:

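# Excerpt: MNT, DEF_UID, DEF_GID and MOUNT_OPTS are defined earlier in the script (not shown here).

# The service passes the action ("add" or "remove") as $1 and the kernel device name as $2.

ACTION=$1

if [ "$ACTION" = "add" ]; then

# blkid's export format sets UUID, TYPE and DEVNAME for the device

eval "$(/sbin/blkid -c /dev/null -o export /dev/$2)"

test -d "$MNT/${UUID}" || mkdir -p "$MNT/${UUID}"

chown $DEF_UID:$DEF_GID "$MNT" "$MNT/${UUID}"

touch "$MNT/${UUID}"

mount "${DEVNAME}" "$MNT/${UUID}" -o $MOUNT_OPTS || /bin/rmdir "$MNT/${UUID}"

test -d "$MNT/${UUID}" && touch "$MNT/${UUID}"

else

# blkid is not run on removal, so derive the device path from $2

DEVNAME=/dev/$2

DIR=$(mount | grep -w "${DEVNAME}" | cut -d ' ' -f 3)

if [ -n "${DIR}" ] ; then

umount "$DIR" || umount -l "$DIR"

fi

fi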

Now we just have to configure Tracker right:

gsettings set org.freedesktop.Tracker.Miner.Files index-removable-devices true

Let’s try that:

# Insert sdcard

[nemo@Jolla ~]$ mount | grep sdcard

/dev/mmcblk1 on /media/sdcard/F6D0-FC42 type vfat (rw,nosuid,nodev,noexec,...

[nemo@Jolla ~]$

[nemo@Jolla ~]$ touch /media/sdcard/F6D0-FC42/test.txt

[nemo@Jolla ~]$ tracker-sparql -q "select tracker:available(?s) nfo:fileName(?s) \

{ ?s nie:url 'file:///media/sdcard/F6D0-FC42/test.txt' }"

Results:

true, test.txt

# Take out the sdcard

[nemo@Jolla ~]$ mount | grep sdcard

[nemo@Jolla ~]$ tracker-sparql -q "select tracker:available(?s) nfo:fileName(?s) \

{ ?s nie:url 'file:///media/sdcard/F6D0-FC42/test.txt' }"

Results:

(null), test.txt

[nemo@Jolla ~]$
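The naming trick used above can be sketched in shell. This is an illustration only: the helper name `volume_id` is made up, and which exact string Tracker feeds to MD5 (the full path or only the directory's name) is an assumption here. The point is that a stable, UUID-carrying directory name yields a stable identifier for a returning card.

```shell
#!/bin/sh
# Hypothetical helper: derive a stable identifier from a mountpoint
# directory by hashing its path with MD5. Hashing the full path is an
# assumption; Tracker's exact input string is not shown in this post.
volume_id() {
    printf '%s' "$1" | md5sum | cut -d ' ' -f 1
}

# The same card always mounts at the same UUID-named directory,
# so it always maps to the same identifier.
volume_id /media/sdcard/F6D0-FC42
```

Two different cards get different directory names and therefore different identifiers, which is all the FS miner needs to recognise a previously seen volume.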

Abstractionitis

Realizing I’ll be wrong again in future, I think I’m finally cured of abstractionitis. Thanks to Miguel for the diagnosis and especially to Rob, who continuously pointed out to me that you can’t escape the responsibility of having to actually implement. Thanks guys.

FOSDEM presentation about Metadata Tracker

I will be doing a presentation about Tracker at FOSDEM this year.

Metadata Tracker is now being used not only on GNOME, the N900 and the N9, but also on the Jolla Phone. On top of that, Pelagicore, a software developer for several car brands, claims to be using it with custom-made ontologies; SerNet told us they are integrating Tracker as a search engine backend for Apple OS X SMB clients; and last year NetAFP integrated Tracker with Netatalk. Other hardware companies have approached the team about integrating the software with their products. In this presentation I’d like to highlight the difficulties those companies encountered and how the project deals with them, the dependencies needed to get a minimal system up and running cleanly, and the recent things the upstream team is working on. I’d also like to propose some future ideas.

Dnsmasq’s code quality

Had to adapt dnsmasq’s code today. My god, that tiny application has shitty code quality. I now feel bad knowing that so many systems depend on this stuff.

Mr. Dillon; smartphone innovation in Europe ought to be about people’s privacy

Dear Mark,

Your team and you yourself are working on the Jolla Phone. I’m sure that you guys are doing a great job and although I think you’ve been generating hype and vaporware until we can actually buy the damn thing, I entrust you with leading them.

As their leader, I would like you to allow them to provide us with all of the device’s source code and the build environments of their projects, so that we can produce the exact same binaries. By exactly the same I mean that the MD5 checksums of our builds should match those of the shipped binaries. I’m sure you know what that means, and you and I know that your team knows how to provide geeks like me with this. I worked together with some of them during Nokia’s Harmattan and Fremantle, and we both know that you can easily identify who can make this happen.

The reason why is simple: I want Europe to develop a secure phone similar to how, among other open source projects, the Linux kernel can be trusted. By peer review of the source code.

Kind regards,

A former Harmattan developer who worked on a component of the Nokia N9 that stores the vast majority of user’s privacy.

ps. I also think that you should reunite Europe’s finest software developers and secure the funds to make this workable. But that’s another discussion which I’m eager to help you with.

In agreement

Surprisingly I’m in agreement with what Richard has to say in this specific context.

Why do you need Tracker?

(Or why our project’s name wasn’t wrong after all)

First and foremost, because the Internet isn’t available everywhere all the time. To put it simply: 3G (and 4G) suck. The latency is a joke in even the most modern countries, even at the center of their capital cities. Reliable availability of The Internet simply doesn’t exist for most people. Not even after a decade of promises and billion dollar investments from advertising firms like Google. Balloons that bring The Internet everywhere? Yeah, sure.

I invite you to try a serious Google maps use-case in a Swiss tunnel. The kind of use-case that software like the nineties’ Microsoft Automap Streets & Trips easily managed decades ago for planning vacations and trips across Europe (without The Internet). Or how about reading the newspapers on the airplane? Technology from before the year 1700 could do it. Today Google’s tablets & glasses can’t, because I have no reliable Internet (the flight-attendant will reliably give me a newspaper, though – go ahead, try it Sergey). And if Google installed 3G routers in those tunnels, airplanes, forests, all third world countries, seas and the truly remote areas of the planet, I could still come up with a lot more places. Everybody can. By the time the Googles of today are finished, we’ll all travel to Mars with latencies of up to an hour while Google’s data still only travels at the speed of light (that, or fix quantum entanglement to be workable and more importantly: scalable to billions of users).

It’s great that sometimes we can use Google maps, sure. But if I can’t rely on it always and everywhere, it means that in embedded: it doesn’t exist. I want my Boeing 747’s landing software to work (oh and when we land on Mars, too). Always. I want software to land us safely. Same for my car’s ABS, and the subway’s and train’s braking systems. I’ll probably be dead when those services don’t operate when I need them. During those moments I don’t care about Google fans and their Google HTML5 religion. Screw HTML5, JavaScript, WebSocket and 3G and thank you interrupt based real time kernels that open my airbag, stop the tram and land the airplane.

For the car industry it’s probably cheaper to provide a storage hardware upgrade when the car must be serviced, than it would be to sell your company’s soul to privacy invading Cloud hosting services. Because in future I would like you to provide Facebook-like services in my car, as reliable as my airbag works. Without the Internet being everywhere. I want you to deliver it to me when I’m visiting Mars. And my kids … who knows what they’ll want?!

Your embedded technology needs to provide graph data about the users’ activity to services that your business wants to share the data with. I’ll illustrate this with This-is-Possible-Today use cases:

– The fridge contains no more milk. While walking the street watching his smartphone, the user opens a recipe for a meal that requires milk. When the user is at the supermarket the technology that will in future be installed on supermarket shopping carts (or his glasses) needs to show the recipe, its ingredients and highlight the fact that the fridge doesn’t contain one of the required ingredients (milk, sugar, butter). And if the user allows this, advertise different brands of milk, sugar and butter based on who paid most plus his wife’s buying habits.

– Your kid talked with a school friend on Facebook about an amusement park (De Efteling! Phantasialand!). Your wife decides that because it’s good weather this weekend, the family should (will) go to an amusement park (yes, she’s the boss. And that’s fine: you own the car – don’t worry, she’ll drive the way back so you can have your weekend nap). So the entire family gets in the (your) car and you ask your son: what amusement park shall I drive to?! Your kid opens the infotainment system at the back seat of the car and sees what he has been interested in over the last few weeks. After privacy authorization (or not) you, as the driver of the car, see the list on the dashboard infotainment system. You select it and the navigation software of the (your) car navigates to it. What a dad! Meanwhile your front passenger seat’s infotainment system goes to the ticket ordering website of the amusement park. What a mom! Advertising related to amusement parks and ticket vending is shown. Of course! Phantasialand!

That is why you need Tracker’s Nepomuk based storage with its SPARQL querying and updating capability.

It lets your embedded appliance do what Facebook does. But in a light way, isolated (or not) from the rest of the world. You decide what happens with the data and who receives it. Allowing you to provide a trust relationship with your customers and consumers. You are the industry providing those cars, fridges and TV sets.

As a BMW driver myself, I would stop buying BMW cars as soon as I learn that BMW sells my driving habits (or whatever) to Google or Facebook (or the NSA). Today I’d trust BMW to integrate those habits into the infotainment system of my next car. IF I can trust BMW. I think that in future it’ll be the difference between succeeding and failing as an industry.

With Tracker you get the use-cases and features. But it’s not for free: you must hire brains instead of paying Google or Facebook’s marketing boys. This comes as a surprise? It has always been that way in tech: Brains and hard work, innovate. Ask Wernher von Braun. They landed on the wrong planet, but in the sixties and seventies his rockets got us to the moon.

A car is a car. A fridge is a fridge. A TV set is a TV set. They shouldn’t be Google’s or Facebook’s data mining devices. Besides, why would you give the data away? Your appliances collected it, not theirs. Talk with your customers fairly and openly on how it can and how it can’t be used.

As managers at these industries it’s up to you to solve the crisis of social features vs. privacy mining.

Kind regards, from one of the guys who developed such technology for Nokia’s N9.

A use-case for SPARQL and Nepomuk

As I was contacted by two different companies in the last few days, both with questions about integrating Tracker into their device, I started thinking that perhaps I should illustrate what Tracker can already do today.

I’m going to make a demo for the public transportation industry in combination with contacts and places of interest. Tracker’s ontologies cross many domains, of course (this is just an example).

I agree that in principle what I’m showing here isn’t rocket science. You can do this with almost any database technology. What is interesting is that as soon as many domains start sharing the ontology and store their data in a shared way, interesting queries and use-cases are made possible.

So let’s first insert a place of interest: the Pizza Hut in Nossegem

tracker-sparql -uq "

INSERT { _:1 a nco:PostalAddress ; nco:country 'Belgium';

nco:streetAddress 'Weiveldlaan 259 Zaventem' ;

nco:postalcode '1930' .

_:2 a slo:Landmark; nie:title 'Pizza Hut Nossegem';

slo:location [ a slo:GeoLocation;

slo:latitude '50.869949'; slo:longitude '4.490477';

slo:postalAddress _:1 ];

slo:belongsToCategory slo:predefined-landmark-category-food-beverage }"

And let’s add some bus stops:

tracker-sparql -uq "

INSERT { _:1 a nco:PostalAddress ; nco:country 'Belgium';

nco:streetAddress 'Leuvensesteenweg 544 Zaventem' ;

nco:postalcode '1930' .

_:2 a slo:Landmark; nie:title 'Busstop Sint-Martinusweg';

slo:location [ a slo:GeoLocation;

slo:latitude '50.87523'; slo:longitude '4.49426';

slo:postalAddress _:1 ];

slo:belongsToCategory slo:predefined-landmark-category-transport }"

tracker-sparql -uq "

INSERT { _:1 a nco:PostalAddress ; nco:country 'Belgium';

nco:streetAddress 'Leuvensesteenweg 550 Zaventem' ;

nco:postalcode '1930' .

_:2 a slo:Landmark; nie:title 'Busstop Hoge-Wei';

slo:location [ a slo:GeoLocation;

slo:latitude '50.875988'; slo:longitude '4.498208';

slo:postalAddress _:1 ];

slo:belongsToCategory slo:predefined-landmark-category-transport }"

tracker-sparql -uq "

INSERT { _:1 a nco:PostalAddress ; nco:country 'Belgium';

nco:streetAddress 'Guldensporenlei Turnhout' ;

nco:postalcode '2300' .

_:2 a slo:Landmark; nie:title 'Busstop Guldensporenlei';

slo:location [ a slo:GeoLocation;

slo:latitude '51.325463'; slo:longitude '4.938047';

slo:postalAddress _:1 ];

slo:belongsToCategory slo:predefined-landmark-category-transport }"

Let’s now get all the bus stops near the Pizza Hut in Nossegem:

tracker-sparql -q "

SELECT ?name ?lati ?long WHERE {

?p slo:belongsToCategory slo:predefined-landmark-category-food-beverage;

slo:location [ slo:latitude ?plati; slo:longitude ?plong ] .

?b slo:belongsToCategory slo:predefined-landmark-category-transport ;

slo:location [ slo:latitude ?lati; slo:longitude ?long ] ;

nie:title ?name .

FILTER (tracker:cartesian-distance (?lati, ?plati, ?long, ?plong) < 1000) }"

Results:

Busstop Sint-Martinusweg, 50.87523, 4.49426

Busstop Hoge-Wei, 50.875988, 4.498208
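To get a feeling for what the 1000 threshold in that FILTER means, the distances can be cross-checked outside Tracker. The sketch below assumes the threshold is in metres and uses the haversine great-circle formula, which is not necessarily what tracker:cartesian-distance computes internally; `distance_m` is a made-up helper name.

```shell
#!/bin/sh
# Hypothetical helper: great-circle (haversine) distance in metres between
# two latitude/longitude points given in degrees. This is a cross-check
# only, not Tracker's implementation.
distance_m() {
    awk -v lat1="$1" -v lon1="$2" -v lat2="$3" -v lon2="$4" 'BEGIN {
        pi = atan2(0, -1); rad = pi / 180
        R = 6371000                      # mean Earth radius in metres
        dlat = (lat2 - lat1) * rad
        dlon = (lon2 - lon1) * rad
        a = sin(dlat / 2) ^ 2 + cos(lat1 * rad) * cos(lat2 * rad) * sin(dlon / 2) ^ 2
        printf "%.0f\n", 2 * R * atan2(sqrt(a), sqrt(1 - a))
    }'
}

# Pizza Hut Nossegem to each of the three bus stops inserted above
distance_m 50.869949 4.490477 50.87523 4.49426     # Sint-Martinusweg
distance_m 50.869949 4.490477 50.875988 4.498208   # Hoge-Wei
distance_m 50.869949 4.490477 51.325463 4.938047   # Guldensporenlei (Turnhout)
```

The first two stops come out below 1000 and the Turnhout stop far above it, which matches the two rows the query returned.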

This of course was an example with only slo:Landmark. But that slo:location property can be placed on any nie:InformationElement. Meaning that for example a nco:PersonContact can also be involved in such a cartesian-distance query (which is of course just an example).

Let’s make an example use-case: We want contact details of friends (with publicized coordinates) who are nearby a slo:Landmark that is in a food and beverage landmark category, so that the messenger application can prepare a text message window where you’ll type that you want to get together to get lunch at the Pizza Hut.

Ok, so let’s add some nco:PersonContact to our SPARQL endpoint who are nearby the Pizza Hut:

tracker-sparql -uq "

INSERT { _:1 a nco:PersonContact ; nco:fullname 'John Carmack';

slo:location [ a slo:GeoLocation;

slo:latitude '50.874715'; slo:longitude '4.49154' ];

nco:hasEmailAddress [ a nco:EmailAddress;

nco:emailAddress 'john.carmack@somewhere.com'] }"

tracker-sparql -uq "

INSERT { _:1 a nco:PersonContact ; nco:fullname 'Greg Kroah-Hartman';

slo:location [ a slo:GeoLocation;

slo:latitude '50.874715'; slo:longitude '4.49158' ];

nco:hasEmailAddress [ a nco:EmailAddress;

nco:emailAddress 'greg.kroah@somewhere.com'] }"

And let’s add one person who isn’t nearby the Pizza Hut in Nossegem:

tracker-sparql -uq "

INSERT { _:1 a nco:PersonContact ; nco:fullname 'Jean Pierre';

slo:location [ a slo:GeoLocation;

slo:latitude '50.718091'; slo:longitude '4.880134' ];

nco:hasEmailAddress [ a nco:EmailAddress;

nco:emailAddress 'jean.pierre@somewhere.com'] }"

And now, the query:

tracker-sparql -q "

SELECT ?name ?email ?lati ?long WHERE {

?p slo:belongsToCategory slo:predefined-landmark-category-food-beverage;

slo:location [ slo:latitude ?plati; slo:longitude ?plong ] ;

nie:title ?pname .

?b a nco:PersonContact;

slo:location [ slo:latitude ?lati; slo:longitude ?long ] ;

nco:fullname ?name ;

nco:hasEmailAddress [ nco:emailAddress ?email ].

FILTER (tracker:cartesian-distance (?lati, ?plati, ?long, ?plong) < 10000) }"

Results:

Greg Kroah-Hartman, greg.kroah@somewhere.com, 50.874715, 4.49158

John Carmack, john.carmack@somewhere.com, 50.874715, 4.49154

These use-cases of course only illustrate the simplified location ontology in combination with the Nepomuk contacts ontology. There are many such domains in Nepomuk and when defining your own platform and/or a new domain on the desktop you can add (your own) ontologies. Mind that for the desktop you should preferably talk to Nepomuk first.

The strength of such a platform is also its weakness: if no information sources put their data into the SPARQL endpoint, no information sink can do queries that’ll yield meaningful results. You of course don’t have this problem in a contained environment where you define what does and what doesn’t get stored and where, like an embedded device.

A desktop like KDE or GNOME shouldn’t have this problem either, if only everybody would agree on the technology and share the ontologies. Which isn’t necessarily happening (fair point), although both KDE with Nepomuk-KDE and GNOME with Tracker share most of Nepomuk.

But indeed; if you don’t store anything in Tracker, it’s useless. That’s why Tracker comes with a file system miner and provides a framework for writing your own miners. The idea is that with time more and more applications will use Tracker, making it increasingly useful. Hopefully.

Bypassing Tracker’s file system miner, for example for MTP daemons

Recapping from my last blog article; I worked a bit on this concept during the weekend.

When a program is responsible for delivery of a file to the file system that program knows precisely when the rename syscall, completing the file transfer transaction, takes place.

An example of such a program is an MTP daemon. I quote from Wikipedia: A main reason for using MTP rather than, for example, the USB mass-storage device class (MSC) is that the latter operates at the granularity of a mass storage device block (usually in practice, a FAT block), rather than at the logical file level.

One solution for metadata extraction for those files is to have file monitoring on the target storage directory with Tracker’s FS miner. The unfortunate thing with such a solution is that file monitoring will inevitably always trigger after the rename syscall. This means that only moments after the transfer has completed, the system can update the RDF storage. Not during and not just in time.

With this new feature I plan to allow software like an MTP daemon to be ahead of that. For example while the file is being transferred, or just in time before and/or just after the rename syscall, depending on the use-case and how the developer plans to use the feature.

The API might still change. I plan, for example, to allow passing the value of tracker:available, among other useful properties whose values an MTP daemon might want to safely tamper with (edit: this is done and the API in this blog article is adapted). The tracker:available property can be used to indicate the availability of a file to other software. For example, while the file is being transferred you could set it to false, and right after the rename you set it to true.

When you are building a device that has no other entry points for user files or documents than MTP, this feature lets you turn off Tracker’s FS miner completely. This could be ideal for certain tablets and phones.
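The file side of that transaction can be sketched in shell. This is a minimal illustration with a made-up helper name (`deliver_file`), not code from an MTP daemon: the data is written under a temporary name next to the destination and then moved into place, because a move within one file system is a rename and therefore atomic. The comments mark the two points where a daemon could hand metadata to Tracker; the calls themselves are left out.

```shell
#!/bin/sh
# Hypothetical helper: deliver SRC to DEST so that DEST only ever shows up
# complete. The temporary file lives in the same directory as DEST, so the
# final mv stays on one file system and is a plain rename.
deliver_file() {
    src=$1 dest=$2
    tmp="$dest.part.$$"
    # Point 1: before or while copying, the daemon could already insert the
    # file's metadata with tracker:available set to false.
    cp "$src" "$tmp" || { rm -f "$tmp"; return 1; }
    mv "$tmp" "$dest" || { rm -f "$tmp"; return 1; }
    # Point 2: right after the rename it could set tracker:available to true.
}

# Example (paths are placeholders):
# deliver_file /tmp/file.png "$HOME/Documents/Photos/photo.png"
```

A file monitor would only notice the file after the final rename; the program doing the delivery knows about both points in advance.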

Currently it looks like this. Branch is available here:

/* Needs <gio/gio.h>, <time.h> and the libtracker-extract header from the branch */

static void on_finished (GObject *none, GAsyncResult *result, gpointer user_data) {

  GMainLoop *loop = user_data;

  GError *error = NULL;

  gchar *sparql = tracker_extract_get_sparql_finish (result, &error);

  if (error == NULL) {

    g_print ("%s", sparql);

    g_free (sparql);

  } else {

    /* g_warning() rather than g_error(): g_error() aborts, so the cleanup below would never run */

    g_warning ("%s", error->message);

  }

  g_clear_error (&error);

  g_main_loop_quit (loop);

}

int main (int argc, char **argv) {

  const gchar *file = "/tmp/file.png";

  const gchar *dest = "file:///home/pvanhoof/Documents/Photos/photo.png";

  const gchar *graph = "urn:mygraph";

  GMainLoop *loop;

  g_type_init ();

  loop = g_main_loop_new (NULL, FALSE);

  tracker_extract_get_sparql (file, dest, graph, time (0), time (0), TRUE, on_finished, loop);

  g_main_loop_run (loop);

  /* GMainLoop is not a GObject, so it is released with g_main_loop_unref() */

  g_main_loop_unref (loop);

  return 0;

}

This will result in something like this:

INSERT SILENT { GRAPH <urn:mygraph> {

_:file a nfo:FileDataObject , nie:InformationElement ;

nfo:fileName "photo.png" ;

nfo:fileSize 38155 ;

nfo:fileLastModified "2012-12-17T09:20:18Z" ;

nfo:fileLastAccessed "2012-12-17T09:20:18Z" ;

nie:isStoredAs _:file ;

nie:url "file:///home/pvanhoof/Documents/Photos/photo.png" ;

nie:mimeType "image/png" ;

a nfo:FileDataObject ;

nie:dataSource <urn:nepomuk:datasource:9291a450-etc-etc> ;

tracker:available true .

_:file a nfo:Image , nmm:Photo ;

nfo:width 150 ;

nfo:height 192 ;

nmm:dlnaProfile "PNG_LRG" ;

# more extracted metadata

nmm:dlnaMime "image/png" .

} }

As usual with stuff that I blog about: this feature isn’t finished, it’s not in master yet, not even reviewed. The API might change. All the usual stuff.

Warming up

Hey former Harmattan peeps. How about we do a little bit of this Jolla stuff after hours and see where it goes? You never know, and none of the technologies and improvements that we did for Nokia have harmed us either. It’s at #jollamobile on FreeNode. Btw. Ping me if you are going to FOSDEM. Maybe we can discuss how we can revive some of our Harmattan projects? Personally, I’m thinking about reducing the role of Tracker’s FS miner in Jolla by first refactoring libtracker-extract and adapting buteo to call for metadata extraction instead of letting miner-fs pick up the newly added files. Death to file system monitoring on phones!

At the same time I’m also working with Calligra a lot lately. Which is by the way awesome stuff. Can’t choose.

Morals? Forbidding stuff?

It isn’t freedom to have to choose Richard Stallman’s world view. It isn’t ‘freedom’ to be called immoral just because you choose another ethic. It isn’t freedom when a single person or group with a single view on morality tries to forbid you something based on just their point of view.

For example, Stallman has repeatedly said about Trusted Computing (which he in a childish way apparently calls Treacherous Computing) that it ‘should be illegal’ (that’s a quote from official FSF and GNU pages). I also recall Stallman trying to forbid blog posts about proprietary software (it was about VMWare) on planet-gnome (original thread here).

Richard Stallman and some of his followers don’t seem to understand that it isn’t necessarily moral to impose your world view, about morality, on everybody else by claiming, for example, that the other’s view ‘should be illegal’ or ‘is immoral’ (these are terms that he and some of his followers frequently use).

Firstly, something should only be illegal when all procedures for making a new law in a country have been followed. In most democratic countries that means getting a majority in parliament, but also getting advice from your country’s judges and from experts in the field who’ll be affected by your new law. So not just by blindly listening to and following a guy like Richard Stallman. This is why I was very much against a rule for planet-gnome to forbid posts about proprietary software that uses GNOME: neither the majority of GNOME foundation members, nor all experts in the field who’d be affected by that new law, nor all the maintainers of planet-gnome (its judges) followed Richard’s opinion.

In this new situation it also isn’t only Richard Stallman who should be blindly followed. Ubuntu needs to take into account all stakeholders and not just Stallman and his followers.

Secondly, morality is defined by a person’s own views and for a huge part by that person’s culture. ‘How we ought to live’ is (also) a question at the individual level. It is not by definition answered by Richard Stallman alone. Although, sure, it can be one’s choice to strictly copy Richard’s morals. Morality is not necessarily a single option, nor is it necessarily written in a single book.

For me it’s not fine when your morality includes forcing others to copy your morals exactly. To put it in a way that Richard’s strict followers might understand: for me morality isn’t like the GPL; agreeing with some of Stallman’s morals does not mean having to copy them all puristically.

Allowing local cults of personality in open source

Hey Aaron. I mostly agree with your post. I don’t fully agree, however, with “We needed Android because we couldn’t do it ourselves”:

Mostly Qt (and also KDE) developers, and some GNOME developers who were still left developing for Nokia since the N900 and earlier, made the Nokia N9 Swipe phone. Technically the product is a success; look at the N9’s reviews to verify that. Marketing-wise it’s sort of a failure due to, in my humble opinion, a CEO switch at the wrong time and because he didn’t have enough time to learn how good the phone actually was. But even without much marketing, the product is being sold as we speak.

I do agree if you mean with your blog post that for example the N9 happened thanks to local leadership. The leadership that made it happen was employed at Nokia though, and not really a person in either the Qt or the GNOME camp. Rather a group of passionate leadership-taking people at Nokia.

It might have contributed that these technical leaders didn’t see how strong they could have been together during the CEO switch, at the time when Ari Jaaksi left Nokia as soon as Stephen Elop’s plans became clear. I’m not sure.

I think what we can learn from the episode is to put more trust in the person, and the leadership-taking people, who lead the next product developed the way the N9 was developed. Give those people more time onstage at open source conferences.

I’m also sick and tired of Free Software being inefficient and self-destructive due to internal schism. It’s one of the reasons why I’m not working much on Free Software nowadays. As I’m not much of a leader myself, I silently hope some local leader would change this. Maybe somebody at Digia? Jolla? If I can help, let me know.

Curiosity

Up early to follow EDL of Curiosity. Follow it live here. Go NASA!

Edit: ‘We are on Mars again. Photo of a wheel and a shadow of the rover.’

How I think companies like Jolla should do it

I’ll focus on the technical stuff; I think I would only Peter Principle myself if I would try giving management advice.

What I’ve seen too often are community projects, companies or groups who think that the synchronization of Harmattan with Moblin or MeeGo was done well enough to make what is now the OS on the N9. Luckily Jolla is hiring Harmattan staff, so they understand the situation.

For me it was always clear that “MeeGo” was a more or less failed PR thing between Intel and Nokia. By the time the N9 was first released, Harmattan wasn’t technically synchronized with Moblin or MeeGo very much. And after several updates of Harmattan it still isn’t.

The situation on the N9 now is an OS that has relatively little technical resemblance to “MeeGo”. For me the N9’s software is Harmattan, or Maemo 6. It’s the continuation of the software on the N900: Maemo 5 or Fremantle (after roughly two or three rather big rewrites, that much is true). That the rewrites happened doesn’t mean that during those rewrites Harmattan suddenly became MeeGo. MeeGo is, in other words, a different platform.

A successful project will have to work with what Harmattan is, and not try to replace it with what MeeGo is today. If they do want to end up with “MeeGo” on an N9 they will have to progressively improve Harmattan towards that goal, for example by asking Nokia to open closed components, by developing fixes for software that is already open source (a lot is), by repackaging it and by explaining to N9 owners how to add a repository and how to upgrade their phone safely.

I understand the idea isn’t to deploy on an N9, but if you want a new phone or device that resembles what the N9 is; the N9’s software is in my opinion not MeeGo but Harmattan. Rewrites have happened too often already. It’s my opinion that yet another rewrite of Harmattan isn’t a good idea at all.

For example replacing the Debian package management system with RPM doesn’t sound like a viable option to me at all. Nor is replacing any of the major middleware really doable within the timeframe you’d have to deliver to be relevant.

Instead, improve the phone’s OS one software project at a time. Kinda like how Ximian did Red Carpet many years ago (which also supported multiple package management systems).

No more big rewrites, no more starting from scratch. No more politics about how it should have been done. Start with the platform as it is. There are reasons why the OS is good, and among the reasons is that good middleware choices and compromises were made.

Kind regards, good luck.

Battery drain on N9 caused by a combination of Battery-Icon, Tracker and Smartsearch

Tired of my N9’s short battery life, I decided to investigate my device a little bit “as a developer”. Last time I did that I was still contracted by Nokia, and a few days later I had to fly to Helsinki to help fix a bug in Tracker in combination with contactsd. Btw, I haven’t been working for Nokia for a few months now. So this time I can’t fix it for everyone. Lemme write it here instead.

It’s pretty funny what is going on: I installed Battery-Icon at some point. The software is writing periodically to /usr/share/applications/battery-icon.desktop. Having been a developer at Nokia for the metadata subsystem I know that tracker-miner-fs will reindex .desktop files that change. You don’t really need to be a developer to know that: Tracker’s FS miner is, among other things, responsible for keeping up to date a list of known applications.

Because of Battery-Icon, which people are probably installing to monitor their battery, tracker-miner-fs wakes up to update the metadata. That in turn wakes up tracker-store to store the metadata. That in turn wakes up smartsearch which will fetch from Tracker some textual data. All three will consume power periodically because of this .desktop file write trigger. I’m guessing the power consumption is triggering Battery-Icon to update the .desktop file. And circular power consumption was born.

I guess I should file a bug on Battery-Icon and tell its author to update the .desktop file less often. I think he could for example wait ten minutes before doing that write. Or is the user really interested in accurate battery information each and every second? It looks like Battery-Icon is even writing to the file more frequently every hour. Interesting behavior for a battery monitoring tool: doing things in a way that significantly influences power consumption.
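To make the "wait ten minutes" suggestion concrete, here is a minimal sketch of a throttled writer in Python. It is not how Battery-Icon is implemented (I haven't looked at its code); the class name `ThrottledWriter`, the `write` callback and the `clock` parameter are all hypothetical and only serve the illustration.

```python
import time


class ThrottledWriter:
    """Drops updates that arrive sooner than min_interval seconds after
    the previous write (hypothetical helper, not Battery-Icon code)."""

    def __init__(self, write, min_interval=600.0, clock=time.monotonic):
        self._write = write                # callable that does the real file write
        self._min_interval = min_interval  # 600 s = the ten minutes suggested above
        self._clock = clock                # injectable so the sketch can be tested
        self._last = None                  # time of the last real write

    def update(self, content):
        """Write content if enough time has passed; return True if written."""
        now = self._clock()
        if self._last is not None and now - self._last < self._min_interval:
            return False                   # too soon: skip, the miner is not woken up
        self._write(content)
        self._last = now
        return True
```

Each skipped write is one less wake-up of tracker-miner-fs, tracker-store and smartsearch. A real fix would probably also want to remember the last skipped value and flush it later; this sketch leaves that out.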

Btw, while it’s not fixed: devel-su (enable developer mode, install terminal and password for devel-su is rootme) on your N9 and chmod -x /usr/bin/smartsearch, reboot, then uninstall Battery-Icon and your battery will last longer. I know the guys who were or are on the smartsearch team are going to hate me for that advice. Sorry guys.

Avoiding duplicate album art storage on the N9

At Tracker (core component of Nokia N9’s MeeGo Harmattan’s Content Framework) we extract album art out of music files like MP3s, and we do a heuristic scan in the same directory of the music files for files like cover.jpg.

Right now we use the media art storage spec that we came up with at a Boston Summit a few years ago, together with the Banshee guys. This specification allows for artist + album media art.

This is a bit problematic now on the N9 because (embedded) album art is getting increasingly bigger. We’ve seen music stores with album art of up to 2MB. The storage space for this kind of data isn’t unlimited on the device. In particular, it is a problem that for an album with say 20 songs by 20 different artists, each having embedded album art, the same album art is stored 20 times, each time for a different artist-album combination.

To fix this we’re working on a solution that compares the MD5 of the image data of the file album-md5(space)-md5(album).jpg with the MD5 of the image data of the file album-md5(artist)-md5(album).jpg. If the contents are the same we will make a symlink from the latter to the former instead of creating a normal new album art file.

When none exist yet, we first make album-md5(space)-md5(album).jpg and then symlink album-md5(artist)-md5(album).jpg to it. And when the contents aren’t the same we create a normal file called album-md5(artist)-md5(album).jpg.
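The logic of the two paragraphs above can be sketched roughly like this in Python. This is only an illustration and not Tracker's actual code (which is the work in progress mentioned below): the function names are made up, and the file naming is simplified, since the real media art spec normalizes the artist and album strings before hashing them.

```python
import hashlib
import os


def md5_hex(data):
    return hashlib.md5(data).hexdigest()


def art_path(cache_dir, artist, album):
    # Simplified naming (assumption): hashes the strings as-is.
    name = "album-%s-%s.jpg" % (md5_hex(artist.encode("utf-8")),
                                md5_hex(album.encode("utf-8")))
    return os.path.join(cache_dir, name)


def store_album_art(cache_dir, artist, album, image):
    """Store image (bytes) for artist + album, symlinking to the
    'just album' file when the image data is identical."""
    album_only = art_path(cache_dir, " ", album)      # md5(space) for artist
    artist_album = art_path(cache_dir, artist, album)

    # When none exist yet, first create the 'just album' file.
    if not os.path.exists(album_only):
        with open(album_only, "wb") as f:
            f.write(image)
    if artist_album == album_only:
        return album_only

    # Compare the MD5 of the stored image data with the new image data.
    with open(album_only, "rb") as f:
        same = md5_hex(f.read()) == md5_hex(image)

    if os.path.lexists(artist_album):
        os.remove(artist_album)
    if same:
        # Same contents: relative symlink instead of a second copy.
        os.symlink(os.path.basename(album_only), artist_album)
    else:
        # Different contents: a normal artist-album file.
        with open(artist_album, "wb") as f:
            f.write(image)
    return artist_album
```

With 20 artists on one album and identical embedded art, this leaves one real file and 20 symlinks in the cache directory instead of 20 copies.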

Consumers of the album art can now choose between using a space for artist if they are only interested in ‘just album’ album art, or filling in both artist and album for artist-album album art.

This is a first idea to solve this issue. We have some other ideas in mind in case this solution comes with unexpected problems.

I usually blog about unfinished stuff. Also this time. You can find the work in progress here.

Null support for INSERT OR REPLACE available in master

About

Last week I wrote about adding a feature to our SPARQL Update’s INSERT OR REPLACE. With that feature you no longer need to put a DELETE in front of the INSERT to clear a field. This makes our SPARQL-ish INSERT OR REPLACE in some ways more powerful than SQL’s UPDATE. Note, however, that all of INSERT OR REPLACE is non-standard in the SPARQL language. And this new null support certainly isn’t standard either.

Support for null with INSERT OR REPLACE is now available in Tracker’s master branch. How to use it is illustrated in the functional test. I’ll briefly explain the test.

For single value properties:

This is of course very simple.

INSERT { <subject> nie:title 'new test' }

INSERT OR REPLACE { <subject> nie:title null }

If you now select nie:title for <subject> then of course you’ll get that its nie:title field is unset.

For multi value properties:

Begin situation:

INSERT { <subject> a nie:DataObject, nie:InformationElement }

INSERT { <ds1> a nie:DataSource }

INSERT { <ds2> a nie:DataSource }

INSERT { <ds3> a nie:DataSource }

INSERT { <subject> nie:dataSource <ds1>, <ds2>, <ds3> }

This will be the test query I’ll use for all cases:

SELECT ?ds WHERE { <subject> nie:dataSource ?ds }

For the begin situation that of course gives us <ds1>, <ds2> and <ds3>.

With null upfront, reset of list, rewrite of new list:

INSERT OR REPLACE { <subject> nie:dataSource null, <ds1>, <ds2> }

This will give us <ds1> and <ds2> for the test query. The first null resets the existing list, then <ds1> and <ds2> are added. This is probably the most sensible one to use for multi value properties.

With null in the middle, rewrite of new list:

INSERT OR REPLACE { <subject> nie:dataSource <ds1>, null, <ds2>, <ds3> }

This gives us <ds2> and <ds3>. First <ds1> is added, but the null that follows clears it again. Then <ds2> and <ds3> get added. So the <ds1> there doesn’t make much sense, indeed.

With null at the end:

INSERT OR REPLACE { <subject> nie:dataSource <ds1>, <ds2>, <ds3>, null }

This one doesn’t make much sense either. The <ds1>, <ds2> and <ds3> get cleared by the null at the end. So the query gives us zero results.

With null as only element:

INSERT OR REPLACE { <subject> nie:dataSource null }

This one makes sense, you can use it to clear a multi value property of a resource. The query gives us zero results.

Multiple nulls:

INSERT OR REPLACE { <subject> nie:dataSource null, <ds1>, null, <ds2>, <ds3> }

Again doesn’t make much sense. First the list is cleared, then <ds1> is added, then it’s again cleared, then <ds2> and <ds3> are added. So the query gives <ds2> and <ds3>.
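The cases above all follow one rule: the objects are processed left to right, and every null resets the list. Here is a tiny Python model of that rule. It only models the behavior described in this post; it says nothing about how Tracker implements it internally, and the function name and the use of None for null are my own choices for the illustration.

```python
NULL = None  # stands in for SPARQL's null in this model


def insert_or_replace(existing, objects):
    """Model of INSERT OR REPLACE on a multi value property:
    walk the objects in order, a null clears everything seen so far."""
    values = list(existing)
    for obj in objects:
        if obj is NULL:
            values.clear()          # reset of the entire list
        elif obj not in values:
            values.append(obj)      # add, without creating duplicates
    return values


begin = ["ds1", "ds2", "ds3"]
print(insert_or_replace(begin, [NULL, "ds1", "ds2"]))                # null upfront
print(insert_or_replace(begin, ["ds1", NULL, "ds2", "ds3"]))         # null in the middle
print(insert_or_replace(begin, ["ds1", "ds2", "ds3", NULL]))         # null at the end
print(insert_or_replace(begin, [NULL]))                              # null only
print(insert_or_replace(begin, [NULL, "ds1", NULL, "ds2", "ds3"]))   # multiple nulls
```

The model makes it easy to see why putting the null upfront is the sensible form: anything written before the last null is thrown away.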

Support for null with Tracker’s INSERT OR REPLACE feature.

I believe it was the QtContacts Tracker team who requested this feature. When they have to unset the value of a resource’s property and at the same time set a bunch of other properties, they need to put a DELETE statement in front of an INSERT OR REPLACE. The DELETE increases the number of queries and introduces a SQL SELECT internally for solving the SPARQL DELETE’s WHERE.

Instead of that they wanted a way to express this in the INSERT OR REPLACE, and that way gain a bit of performance. Today I implemented this.

So let’s say we start with:

INSERT { <subject> a nie:InformationElement ; nie:title 'test' }

And then we replace the nie:title:

INSERT OR REPLACE { <subject> nie:title 'new test' }

Then of course we get ‘new test’ for the nie:title of the resource:

SELECT ?title { <subject> nie:title ?title }

Then let’s say we want to unset the nie:title, we can either use:

DELETE { <subject> nie:title ?title } WHERE { <subject> nie:title ?title }

or we can now also use this (and avoid an extra internal SQL SELECT to solve the SPARQL DELETE’s WHERE):

INSERT OR REPLACE { <subject> nie:title null }

For multi value properties a null object in INSERT OR REPLACE results in a reset of the entire list of objects. There is still a SQL SELECT happening internally to get the so-called old values, but that one is sort of unavoidable and is also used by a normal DELETE. I hope this feature helps the QtContacts Tracker team gain performance for their contact synchronization use cases.

You can find this in a branch. It might take some time before it reaches master, as most of the Tracker team is at the Berlin Desktop Summit and it must be reviewed first, of course. Since it only adds a feature and doesn’t really change any of the existing APIs, we might also bring it to 0.10. Although now that we started with 0.11, I think it probably belongs in 0.11 only. Distributions should probably either just upgrade, wait for the new features until they decide to bump the version of their packages, or backport features themselves.